

Smart glasses are back

by Bibiana Campos Seijo

July 31, 2017

A version of this story appeared in Volume 95, Issue 31.

Virtual or augmented reality and artificial intelligence are terms that are used to death these days. With so many businesses under pressure to keep up with technology, it feels like the means rather than the end is dominating the conversation. Granted, technology is a powerful enabler, so it is important that we spend time talking about the possibilities and challenges it presents.

There are a number of companies pushing the boundaries in this area, such as Google, which has just released the Enterprise Edition of Google Glass. An earlier version of this smart head-mounted display and camera appeared several years ago, but despite the hype and publicity that went with it, the device failed to catch on in the consumer market. Google, however, has identified a new opportunity: the factory floor. After a makeover, Google Glass is being tested in plants around the U.S. by companies including Boeing and General Electric.

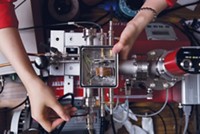

A factory may not be the first place that comes to mind when thinking about cutting-edge technology. But of course, factory workers and engineers often need real-time information while operating or building machinery and products with their hands. Some of the companies participating in the tests had experimented with tablets in the past. But even the most resilient of these devices did not last long enough in typically punishing industrial environments to make a significant difference.

Smart glasses offer a powerful solution. They can be operated hands-free via voice commands and offer a computer display that users can view simply by shifting their gaze. Reports in the news media have described the experience as a “lightweight version of augmented reality.”

The makeover of Google Glass also addressed some of the deficiencies that had been identified in the first iteration. The device now has a better camera, a longer battery life (essential for those on eight-hour shifts), and faster Wi-Fi. The company also introduced the Glass Pod, a module that contains the electronics and can be attached to and detached from any glasses with compatible frames, meaning it can be fitted to safety goggles or prescription glasses.

The experience of those using Google Glass on the factory floor has so far been very positive: the devices provide real-time information when needed and reportedly improve not only productivity but also quality. Think about it: limited interruptions to your workflow. No more writing something down on paper, walking over to a computer (which may be shared among a group of people), and then typing it up.

For chemists, this technology could be a game changer. Imagine having each step of a reaction directly in front of you and then being guided through each of those steps: what chemicals to use, how much of each, reaction temperatures, and safety data all provided as you go. You could even take pictures or record what you are doing and share it with collaborators at a later date or in real time. It could improve reproducibility (which is of course linked to quality) as well as compliance with guidelines. The device could require the wearer to comply with a protocol that needs signing off as each step is completed.

Other areas where the potential is immense are training and safety. I am also excited about possible interconnectivity between smart glasses and other lab technology, including electronic lab notebooks and machinery, which could, for example, enable technicians to operate equipment via the glasses.

It’s interesting to see that something that started as technology for technology’s sake is now finding a market on the factory floor, of all places. The forecast is that by 2025, about 14 million U.S. workers will wear smart glasses. They could be coming to a lab near you very soon.

Views expressed on this page are those of the author and not necessarily those of ACS.
