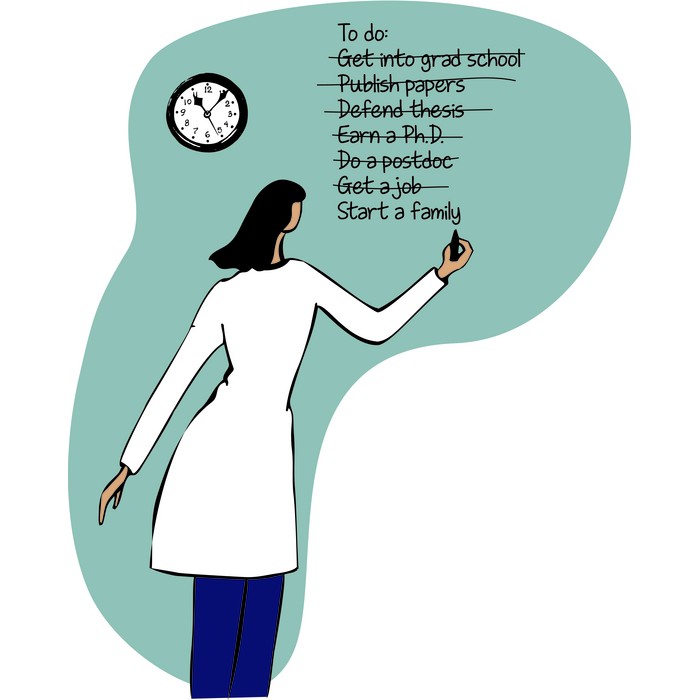

Women In Science

Hiring checklists help but don’t solve faculty’s biases, study says

by Jyllian Kemsley

June 30, 2022

A version of this story appeared in Volume 100, Issue 24

The use of rubrics to evaluate job candidates is touted as a way to reduce bias and improve diversity, equity, and inclusion in hiring. But few real-world studies exist to demonstrate that checklists of hiring criteria have the intended results, according to a group of sociologists and engineers at the University of California San Diego. To address that data gap, the researchers evaluated rubric use in four engineering faculty searches at one university that resulted in the hiring of three women and six men (Science 2022, DOI: 10.1126/science.abm2329). They found that women and men were hired in more-equal numbers when a rubric was used, but gender bias was still evident in rubric scoring. Limited data prevented the analysis of rubrics’ impact on hiring fairness related to race, ethnicity, and other aspects of diversity. The researchers concluded that rubrics should include a calibration metric that can be verified independently—such as comparing a candidate’s number of papers to productivity scores given by evaluators—to detect bias in scoring. The researchers also recommend that meetings of evaluators begin with one person presenting results. “By opening with a neutral reading of the full set of both positive and negative rubric comments, the impact was blunted of any first speakers, often senior men, attempting to vociferously promote or shoot down a candidate,” they say in the paper.
