Synthesis

Chemists hand off reaction optimization to automated ‘plug and play’ flow system

Reconfigurable machine quickly finds optimal reaction conditions for a variety of reactions

by Tien Nguyen

September 20, 2018

A version of this story appeared in Volume 96, Issue 38

Organic chemists optimizing a reaction, like chefs perfecting a dish, execute a single transformation over and over, each time tweaking a specific aspect, such as temperature or ingredient ratio, while observing the change’s effect on the final product. For chemists, whose experimental efforts don’t yield tasty treats, this process is usually tedious and time-consuming. While optimizing the parameters of a known reaction with few reagents might take a graduate student about a week of work, optimizing an unknown reaction, depending on the student’s chemical intuition or luck, may take months.

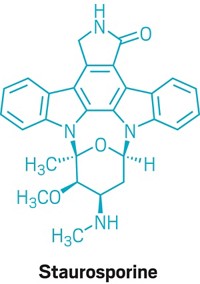

Now, an automated flow system proposes to streamline the optimization process. Developed by an interdisciplinary team of scientists at the Massachusetts Institute of Technology led by Timothy F. Jamison and Klavs F. Jensen, the reconfigurable system lets users find optimal reaction conditions up to an order of magnitude faster than the traditional optimization route, the authors say (Science 2018, DOI: 10.1126/science.aat0650). The team suggests that their system can be used for a myriad of reactions and could also help chemists scale up syntheses or construct compound libraries to screen for bioactivity. The goal was to design a system that can take on some of the work of synthesizing molecules so that scientists have more time to think about and discuss their science, Jamison says.

On average, the system was able to optimize a reaction in less than a day of continuous flow, with high-throughput liquid chromatography analysis of the crude reaction mixtures being the slowest part of the process. However, the compact, suitcase-sized system can be directly connected to a number of different analytical instruments, including faster techniques like mass spectrometry, infrared spectroscopy, and Raman spectroscopy.

Recent advances in automated syntheses in flow have generally focused on specific reactions. Yet the researchers wanted to build a system that would be compatible with many different reactions. The biggest challenge was creating a versatile design that was truly “plug-and-play,” says co-lead author Anne-Catherine Bédard, who was a postdoctoral researcher in the Jamison lab and now works at Dow.

Their solution was a modular design with several small, rectangular reactor modules to choose from. Options included reactors for heating and cooling, a reactor with light-emitting diodes for photocatalytic reactions, a packed-bed reactor for reactions with solid-supported catalysts, a liquid-liquid separator module for separating unwanted side products, and a bypass module for mixing reagents. Users could pump up to six reagents from feedstock solutions into the reactor module(s) of their choice, which they could plug into any of the five loading bays as simply as loading a cartridge into an old-school video game console.
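To make the constraints of that modular layout concrete, here is a minimal Python sketch of a setup check. It is not the MIT team's software: the class `FlowSetup`, the method `problems`, and the module labels are hypothetical names chosen for illustration. Only the limits of six reagents and five loading bays, and the kinds of modules, come from the description above.

```python
from __future__ import annotations
from dataclasses import dataclass

# Assumed labels for the module kinds named in the article;
# these are not identifiers from the real instrument software.
MODULE_TYPES = {"heated", "cooled", "led", "packed_bed", "separator", "bypass"}
MAX_BAYS = 5       # the article mentions five loading bays
MAX_REAGENTS = 6   # the article mentions up to six reagent feeds


@dataclass
class FlowSetup:
    """Hypothetical description of one plug-and-play configuration."""
    reagents: list[str]
    bays: list[str]  # module label per occupied bay, in flow order

    def problems(self) -> list[str]:
        """Return human-readable issues; an empty list means the checks pass."""
        issues = []
        if not 1 <= len(self.reagents) <= MAX_REAGENTS:
            issues.append(
                f"expected 1 to {MAX_REAGENTS} reagents, got {len(self.reagents)}"
            )
        if len(self.bays) > MAX_BAYS:
            issues.append(f"only {MAX_BAYS} bays available, got {len(self.bays)}")
        for position, module in enumerate(self.bays, start=1):
            if module not in MODULE_TYPES:
                issues.append(f"bay {position}: unknown module {module!r}")
        return issues


setup = FlowSetup(
    reagents=["aryl halide", "boronic acid", "catalyst", "base"],
    bays=["bypass", "heated", "separator"],
)
print(setup.problems())  # an empty list means this setup passes the checks
```

The sketch only validates counts and module names. A real instrument would also need to track flow rates, tubing volumes, and which reagent feeds join the stream at which bay.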

The system interface is very user-friendly, Bédard says. With less than an hour of training, she says, a graduate student who's never used the system before could independently use the instrument to optimize their reaction. Once the user has reagent feedstocks and reactors in place, they can program upper and lower limits for each reagent and then click a button that optimizes for yield (based on starting material or product traces), throughput, and/or selectivity. Then the algorithm takes over.

The so-called SNOBFIT algorithm optimizes reactions differently from a researcher who designs a linear series of experiments using their chemical knowledge. Instead of optimizing each variable one at a time, the software optimizes the reaction as a system, running a handful of reactions with randomized conditions and learning from the results to design the next set of reactions, until it converges on the optimal conditions. Bédard says watching the system run reactions was stressful at first. It may run reactions that seem obviously terrible to an observer, she says, but poor results are valuable information for the algorithm. The system runs around 30 reactions to optimize five variables.
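The batch-and-learn loop described above can be illustrated with a short Python sketch. This is not SNOBFIT itself, which fits local models to the measured data. The sketch swaps in a much simpler strategy: sample a handful of random conditions within the user's limits, record the results, then narrow the search box around the best point seen so far. The function names, the two variables, and the toy `simulated_yield` surface are all assumptions made for illustration. No real chemistry data is involved.

```python
import random


def simulated_yield(temp_c, equiv):
    """Hypothetical stand-in for running a reaction and measuring yield (%).

    The toy surface peaks at 80 degrees C and 1.5 equivalents.
    """
    return max(0.0, 100.0 - 0.05 * (temp_c - 80.0) ** 2 - 40.0 * (equiv - 1.5) ** 2)


def optimize(objective, bounds, batches=6, batch_size=5, shrink=0.6, seed=0):
    """Batch-wise random search with a shrinking box (a stand-in, not SNOBFIT)."""
    rng = random.Random(seed)
    box = [tuple(b) for b in bounds]
    history = []  # every (conditions, result) pair, including poor results
    for _ in range(batches):
        # Run a handful of experiments at randomized conditions inside the box.
        batch = [tuple(rng.uniform(lo, hi) for lo, hi in box) for _ in range(batch_size)]
        history.extend((x, objective(*x)) for x in batch)
        # Learn from all results so far: recentre and narrow the box
        # around the best point, clipped to the user's original limits.
        best_x, _ = max(history, key=lambda record: record[1])
        new_box = []
        for (lo, hi), (lo0, hi0), centre in zip(box, bounds, best_x):
            half = (hi - lo) * shrink / 2
            new_box.append((max(lo0, centre - half), min(hi0, centre + half)))
        box = new_box
    return max(history, key=lambda record: record[1]), history


# Upper and lower limits for each variable, as a user would enter them.
limits = [(20.0, 120.0), (1.0, 3.0)]
(best_conditions, best_yield), runs = optimize(simulated_yield, limits)
print(len(runs), best_conditions, round(best_yield, 1))
```

With the default settings the loop spends 30 experiments, the same order as the run count quoted above, although this toy example varies only two parameters. Poor results are kept in `history` because they still inform where the next batch should not go.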

Using their system, the team identified optimized reaction conditions and explored the substrate scope of commonly used reactions, including cross-coupling, olefination, photoredox, and nucleophilic aromatic substitution reactions. The researchers also demonstrated the system's ability to reproduce results by optimizing a reaction in the Jensen lab and then transferring it to the Jamison lab to independently obtain the same results.

The researchers also optimized a known ketene [2+2] cycloaddition (J. Am. Chem. Soc. 2013, DOI: 10.1021/ja3103007). The new conditions enabled the team to expand the reaction's scope to bulkier and more highly substituted alkenes, though success required elevated temperatures and about three times the amount of Lewis acid compared to the precedent.

University of Michigan’s Tim Cernak, who previously developed a miniaturized high-throughput synthesis technique at Merck & Co. to explore reaction conditions and protein affinity, says the work is a “tour de force of hardware and software development.” He says “it takes all the popular operations of organic chemistry and reduces them to six robust flow modules that can be swapped in and out of an instrument like apps on your smartphone. Mixing and matching the modules gives more than 15,000 conceivable configurations, so it seems that plenty of creative synthetic workflows will be accommodated.”

“Democratizing synthesis is an accessible moonshot for chemistry,” says University of Illinois, Urbana-Champaign’s Martin D. Burke, whose lab developed a process for the iterative cross-coupling of small molecule building blocks in 2015. “This simple and user-friendly platform will expand access to several highly versatile synthetic reactions and thereby add significantly to the growing momentum in this exciting direction.”
